Summary

Anthropic, a leading artificial intelligence company, recently faced a major data leak involving its Claude Code tool. The company accidentally released the full source code for the command line interface (CLI) version of the software. This happened because of a technical error in a recent software update that included a file meant only for internal use. While the actual AI models remain secure, the blueprints for how the tool functions are now available to the public and competitors.

Main Impact

The leak is a significant blow to Anthropic’s competitive advantage. Claude Code has become a popular tool for developers who want to use AI to help them write and fix computer programming code. By exposing the source code, Anthropic has essentially given away the "secret recipe" for how this specific tool works. Competitors can now study the code to see how Anthropic handles complex tasks, which could allow them to build similar tools much faster. Additionally, having the code public makes it easier for bad actors to find security weaknesses in the software.

Key Details

What Happened

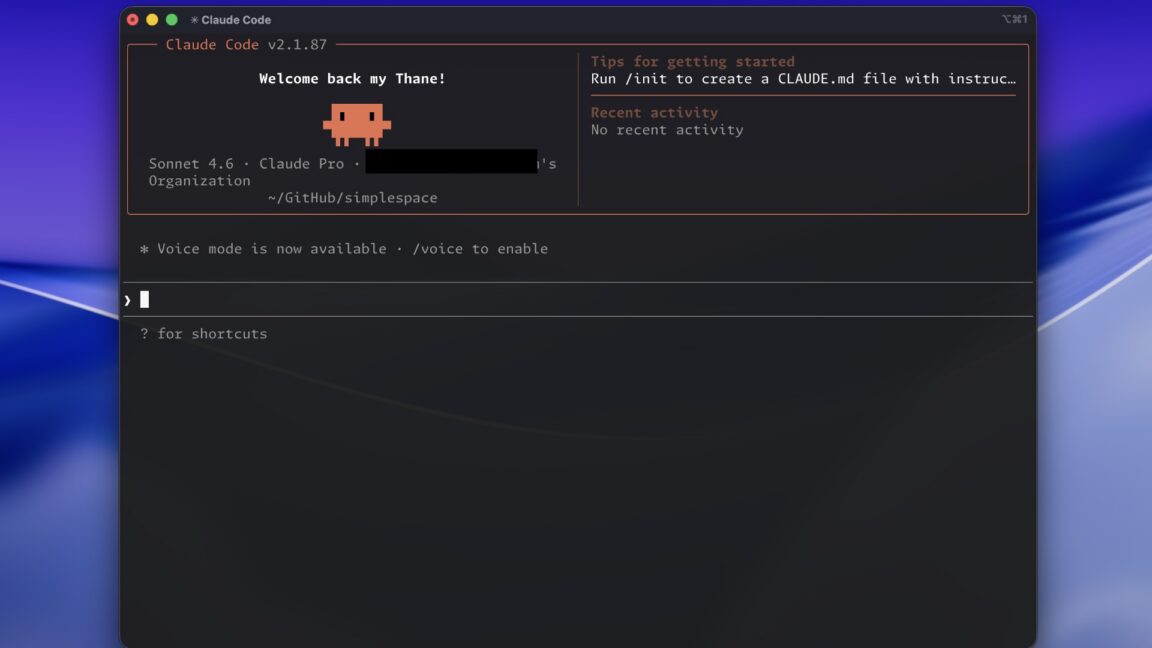

The leak occurred when Anthropic published version 2.1.88 of the Claude Code package to a public registry called npm. This registry is a place where developers go to download tools and code libraries. Usually, when a company shares software this way, they "minify" the code. This process shrinks the files so they download and load faster, but it also makes the code very hard for humans to read. However, Anthropic accidentally included a "source map" file in this update. A source map is a debugging file that acts like a map, linking the scrambled, minified code back to the original, clear instructions written by the developers.
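To make the idea concrete, here is a minimal sketch of how a source map can give back the original code. It assumes the standard Source Map v3 JSON layout, in which the optional `sourcesContent` field can carry the full text of each original file. The map below is made up for illustration; the file names, the `recover_sources` helper, and the assumption that the leaked file embedded its sources this way do not come from the reporting above.

```python
import json

# A tiny, made-up source map in the Source Map v3 JSON layout.
# File names and contents are illustrative, not taken from the leaked package.
EXAMPLE_MAP = json.dumps({
    "version": 3,
    "file": "cli.min.js",
    "sources": ["src/greet.ts"],
    "sourcesContent": [
        "export function greet(name: string) {\n  return `Hello, ${name}`;\n}\n"
    ],
    "names": [],
    "mappings": "",
})


def recover_sources(source_map_text: str) -> dict[str, str]:
    """Return {original path: original text} for sources embedded in the map."""
    data = json.loads(source_map_text)
    paths = data.get("sources", [])
    # sourcesContent is optional, and an entry may be null when the
    # original text was not embedded for that file.
    contents = data.get("sourcesContent") or []
    return {
        path: text
        for path, text in zip(paths, contents)
        if text is not None
    }


if __name__ == "__main__":
    for path, text in recover_sources(EXAMPLE_MAP).items():
        print(f"--- {path} ---")
        print(text)
```

When a map does not embed the original text, it still lists the original file paths and position mappings, but the readable code itself cannot be rebuilt from the map alone.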

Important Numbers and Facts

Once the mistake was noticed, news of it spread rapidly across the internet. Security researcher Chaofan Shou was the first to report the error on social media. The leak is massive in scale, containing nearly 2,000 TypeScript files. TypeScript is a common language used to build large software projects. In total, more than 512,000 lines of code were exposed. Even though Anthropic tried to fix the mistake, the code had already been copied. It was uploaded to GitHub, a popular site for sharing code, where it has been "forked," or copied, tens of thousands of times. This means the code is likely to remain available on the internet, and Anthropic cannot fully delete it.

Background and Context

To understand why this matters, it helps to know what Claude Code does. It is a command line interface, which is a text-based way for humans to talk to a computer. Instead of clicking buttons, developers type commands. Claude Code connects a developer's computer directly to Anthropic's AI. It can read files, suggest changes, and even run tests to see if the code works. Because it is so powerful, it has helped Anthropic grow quickly in the tech industry. In the world of software, source code is considered a trade secret. It represents thousands of hours of work and millions of dollars in investment. Losing control of this code is one of the worst things that can happen to a software company.

Public or Industry Reaction

The tech community has reacted with a mix of shock and curiosity. Many developers are currently looking through the leaked files to see how Anthropic solved difficult engineering problems. Some have praised the quality of the code, while others are using it to learn how to build their own AI assistants. On the other hand, security experts are concerned. They warn that when source code is public, hackers can look for "exploits" or ways to break the software. There is also a lot of talk about how such a large and well-funded company could make such a simple mistake. It serves as a reminder that even the most advanced AI companies are run by humans who can make errors.

What This Means Going Forward

Anthropic will likely need to change its internal rules for how it releases software. It will probably add more automated checks to ensure that source maps are never included in public releases again. For the users of Claude Code, the tool will likely continue to work as normal, but they should be prepared for frequent updates as Anthropic tries to patch any security holes found in the leaked code. In the broader market, other companies might release similar tools very soon, using the ideas they gathered from this leak. The long-term damage to Anthropic’s reputation will depend on how the company handles the situation and whether it can keep its more important AI models safe in the future.
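An automated check of this kind can be very small. The sketch below is one hypothetical version, not Anthropic's actual release process: it scans a package folder for any file ending in `.map` and returns a failing exit code if it finds one, so a release pipeline could stop before publishing. The function names and the folder layout are assumptions made for illustration.

```python
import sys
from pathlib import Path


def find_source_maps(package_dir: str) -> list[str]:
    """List every *.map file under package_dir, relative to it, sorted."""
    root = Path(package_dir)
    return sorted(
        p.relative_to(root).as_posix()
        for p in root.rglob("*.map")
        if p.is_file()
    )


def main(argv: list[str]) -> int:
    # Hypothetical usage: python check_maps.py <package folder>
    package_dir = argv[1] if len(argv) > 1 else "."
    leaked = find_source_maps(package_dir)
    for path in leaked:
        print(f"source map would be published: {path}")
    # A non-zero exit code lets a release job halt before publishing.
    return 1 if leaked else 0


if __name__ == "__main__":
    sys.exit(main(sys.argv))
```

A folder scan like this only approximates what a registry upload contains, because npm applies its own include and ignore rules. Running `npm pack --dry-run`, which lists the files that would go into the package, is a more direct way to confirm what is about to ship.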

Final Take

This event highlights the thin line between a successful software launch and a major corporate disaster. While the leak does not expose the actual AI "brains" that power Claude, it does reveal the complex machinery that allows those brains to interact with the real world. Anthropic now faces the difficult task of moving forward while their own blueprints are in the hands of everyone else. It is a tough lesson in the importance of basic digital security in the fast-moving age of artificial intelligence.

Frequently Asked Questions

Were the Claude AI models leaked?

No. The leak only included the source code for the Claude Code CLI tool. The actual AI models, which are the most valuable part of Anthropic's technology, remain private and secure on their servers.

What is a source map file?

A source map is a file that helps developers debug their code. It connects the minified, hard-to-read version of the software that users run back to the original, readable code that the developers wrote.

Can Anthropic get the code back?

Once code is leaked and copied thousands of times on sites like GitHub, it is almost impossible to remove it from the internet. Anthropic can ask sites to take it down, but many people already have private copies on their own computers.